Abstract

Long-horizon LLM agents require memory systems that remain accurate under fixed context budgets.

However, existing systems struggle with two persistent challenges in long-term dialogue:

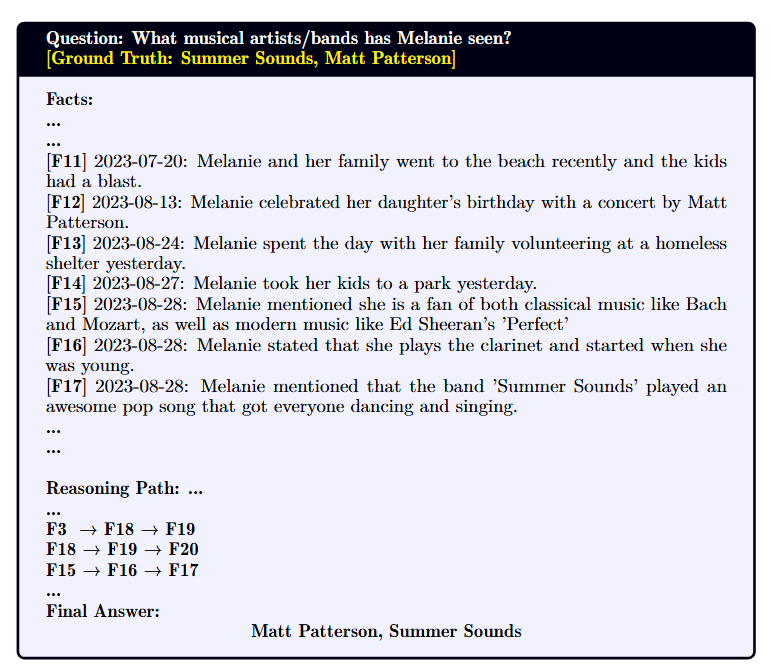

(i) disconnected evidence, where multi-hop answers require linking facts distributed across time,

and (ii) state updates, where evolving information (e.g., schedule changes) creates conflicts with older static logs.

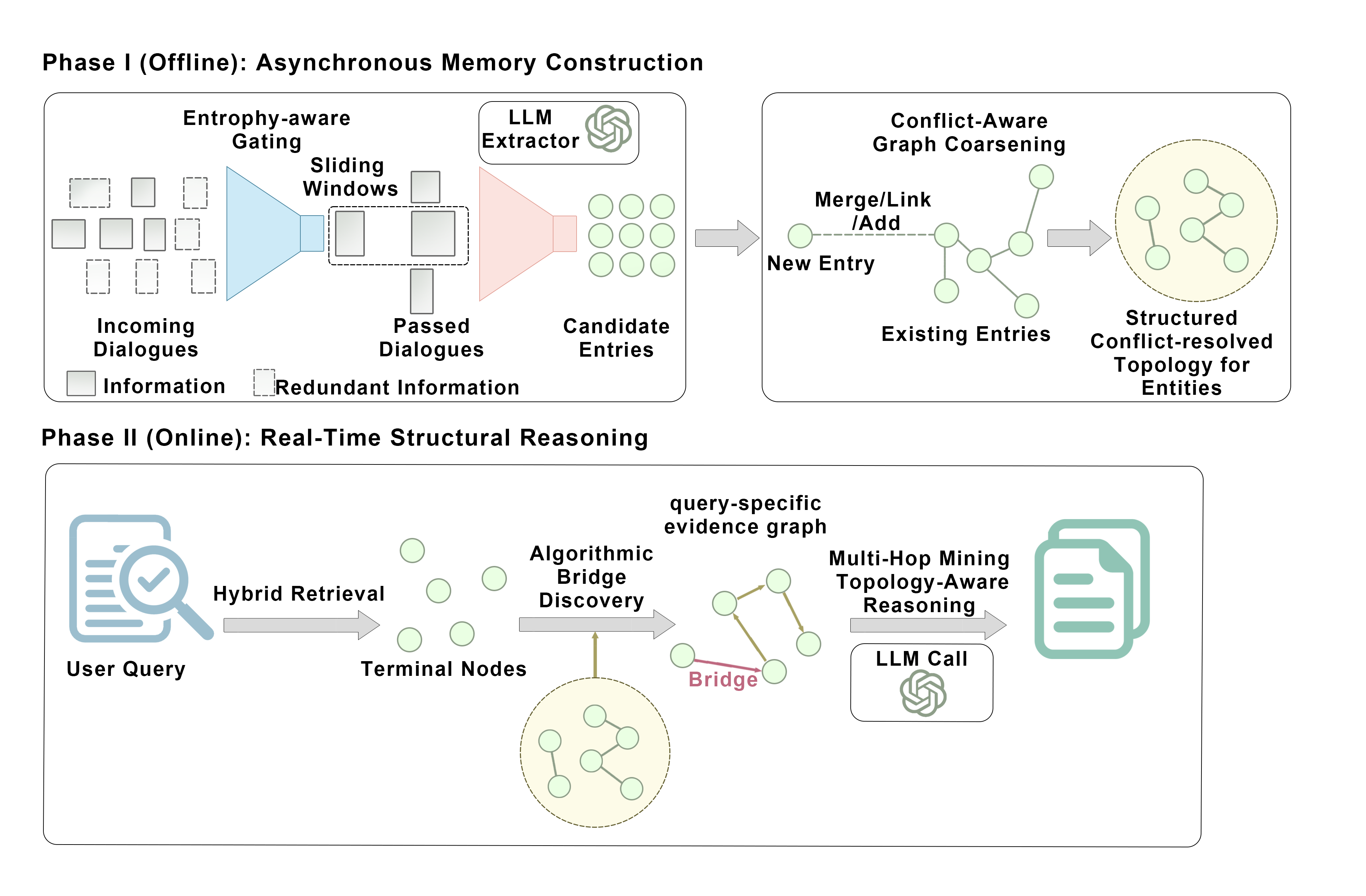

We propose AriadneMem, a structured memory system that addresses these failure modes via a decoupled two-phase pipeline.

In the offline construction phase, AriadneMem employs entropy-aware gating to filter out noise and low-information messages before LLM extraction, and applies conflict-aware coarsening to merge static duplicates while preserving state transitions as temporal edges.
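The offline phase can be illustrated with a minimal Python sketch. The abstract does not say how entropy is measured or how extracted facts are represented, so everything below is an assumption: the hypothetical `token_entropy` scores a message by the Shannon entropy of its whitespace tokens, `entropy_gate` drops messages below a hypothetical `threshold`, and `coarsen` treats facts as hypothetical (subject, attribute, value, time) records, merging repeats of an unchanged value and linking a changed value to its predecessor with a temporal edge.

```python
import math
from collections import Counter
from dataclasses import dataclass, field


def token_entropy(text: str) -> float:
    """Shannon entropy (bits) of the whitespace-token distribution of a message.
    Assumption: a stand-in for whatever information measure the real gate uses."""
    tokens = text.lower().split()
    if not tokens:
        return 0.0
    n = len(tokens)
    return sum((c / n) * math.log2(n / c) for c in Counter(tokens).values())


def entropy_gate(messages: list[str], threshold: float = 2.0) -> list[str]:
    """Keep only messages at or above a hypothetical entropy threshold,
    so low-information filler never reaches the (expensive) LLM extractor."""
    return [m for m in messages if token_entropy(m) >= threshold]


@dataclass
class Fact:
    """Hypothetical extracted fact: a subject/attribute/value observed at a time step."""
    subject: str
    attribute: str
    value: str
    time: int


@dataclass
class MemoryGraph:
    nodes: list[Fact] = field(default_factory=list)
    # (older_node_index, newer_node_index) pairs recording state transitions
    temporal_edges: list[tuple[int, int]] = field(default_factory=list)


def coarsen(facts: list[Fact]) -> MemoryGraph:
    """Merge static duplicates; keep value changes as new nodes joined by temporal edges."""
    graph = MemoryGraph()
    latest: dict[tuple[str, str], int] = {}  # (subject, attribute) -> newest node index
    for fact in sorted(facts, key=lambda f: f.time):
        key = (fact.subject, fact.attribute)
        prev = latest.get(key)
        if prev is not None and graph.nodes[prev].value == fact.value:
            continue  # unchanged value: static duplicate, merged into the existing node
        graph.nodes.append(fact)
        new = len(graph.nodes) - 1
        if prev is not None:
            graph.temporal_edges.append((prev, new))  # conflict kept as a transition
        latest[key] = new
    return graph


if __name__ == "__main__":
    print(entropy_gate(["ok ok", "moved the dentist appointment to friday"]))
    g = coarsen([
        Fact("dentist", "day", "Tuesday", 1),
        Fact("dentist", "day", "Tuesday", 2),
        Fact("dentist", "day", "Friday", 3),
    ])
    print([f.value for f in g.nodes], g.temporal_edges)
```

In this sketch, an appointment mentioned as Tuesday in two sessions and later moved to Friday yields two nodes joined by one temporal edge, rather than three redundant nodes or a single overwritten value that loses the history.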

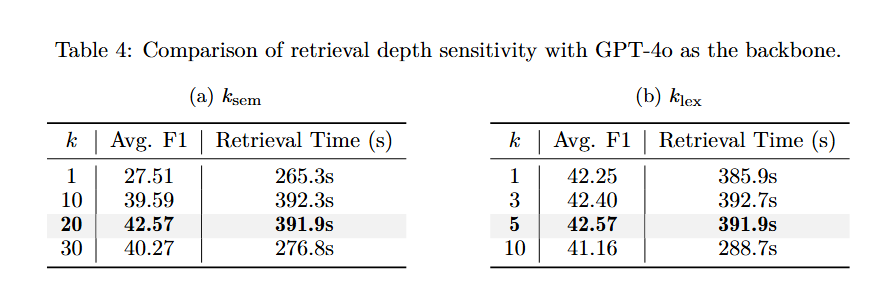

In the online reasoning phase, rather than relying on expensive iterative planning, AriadneMem executes algorithmic bridge discovery to reconstruct missing logical paths between retrieved facts, followed by single-call topology-aware synthesis.
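A similar sketch can illustrate the online phase, again under assumptions the abstract does not state: memory is a plain undirected adjacency dict, bridge discovery is approximated by breadth-first shortest paths between each pair of retrieved facts within a hypothetical `max_hops` limit, and topology-aware synthesis is approximated by serializing the selected facts together with the links among them into one prompt for a single LLM call. All function names are hypothetical.

```python
from collections import deque
from typing import Optional


def shortest_path(adj: dict[str, list[str]], src: str, dst: str) -> Optional[list[str]]:
    """Breadth-first search on an undirected graph; returns the node path or None."""
    parent: dict[str, Optional[str]] = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            cur: Optional[str] = node
            while cur is not None:  # walk parent pointers back to src
                path.append(cur)
                cur = parent[cur]
            return path[::-1]
        for nxt in adj.get(node, []):
            if nxt not in parent:
                parent[nxt] = node
                queue.append(nxt)
    return None


def discover_bridges(adj: dict[str, list[str]], retrieved: list[str], max_hops: int = 3) -> set[str]:
    """Collect interior nodes on short paths between every pair of retrieved facts:
    the 'bridges' that similarity-based retrieval alone tends to miss."""
    bridges: set[str] = set()
    for i, a in enumerate(retrieved):
        for b in retrieved[i + 1:]:
            path = shortest_path(adj, a, b)
            if path is not None and len(path) - 1 <= max_hops:
                bridges.update(path[1:-1])  # interior nodes only
    return bridges - set(retrieved)


def build_prompt(question: str, texts: dict[str, str], adj: dict[str, list[str]], nodes: set[str]) -> str:
    """Serialize the selected subgraph (facts plus the links among them) into one
    prompt, so answering needs a single LLM call instead of iterative planning."""
    facts = [f"[{n}] {texts[n]}" for n in sorted(nodes)]
    links = sorted({tuple(sorted((u, v))) for u in nodes for v in adj.get(u, []) if v in nodes})
    return "\n".join(["Facts:", *facts, "Links:", *[f"{u} -- {v}" for u, v in links], f"Question: {question}"])


if __name__ == "__main__":
    adj = {"A": ["B"], "B": ["A", "C"], "C": ["B", "D"], "D": ["C"]}
    texts = {
        "A": "Sam adopted a puppy in May.",
        "B": "Sam named the puppy Rex.",
        "C": "Rex had a vet visit in June.",
        "D": "The vet clinic is downtown.",
    }
    retrieved = ["A", "C"]  # e.g. top hits from a similarity search
    bridges = discover_bridges(adj, retrieved)
    print(build_prompt("When did Sam's puppy see the vet?", texts, adj, set(retrieved) | bridges))
```

Here the retrieved facts A and C share no direct link, and the search recovers B as the bridge that connects "puppy" to "Rex". The graph search is deterministic and cheap, which is the intuition behind replacing iterative LLM planning with an algorithmic step.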

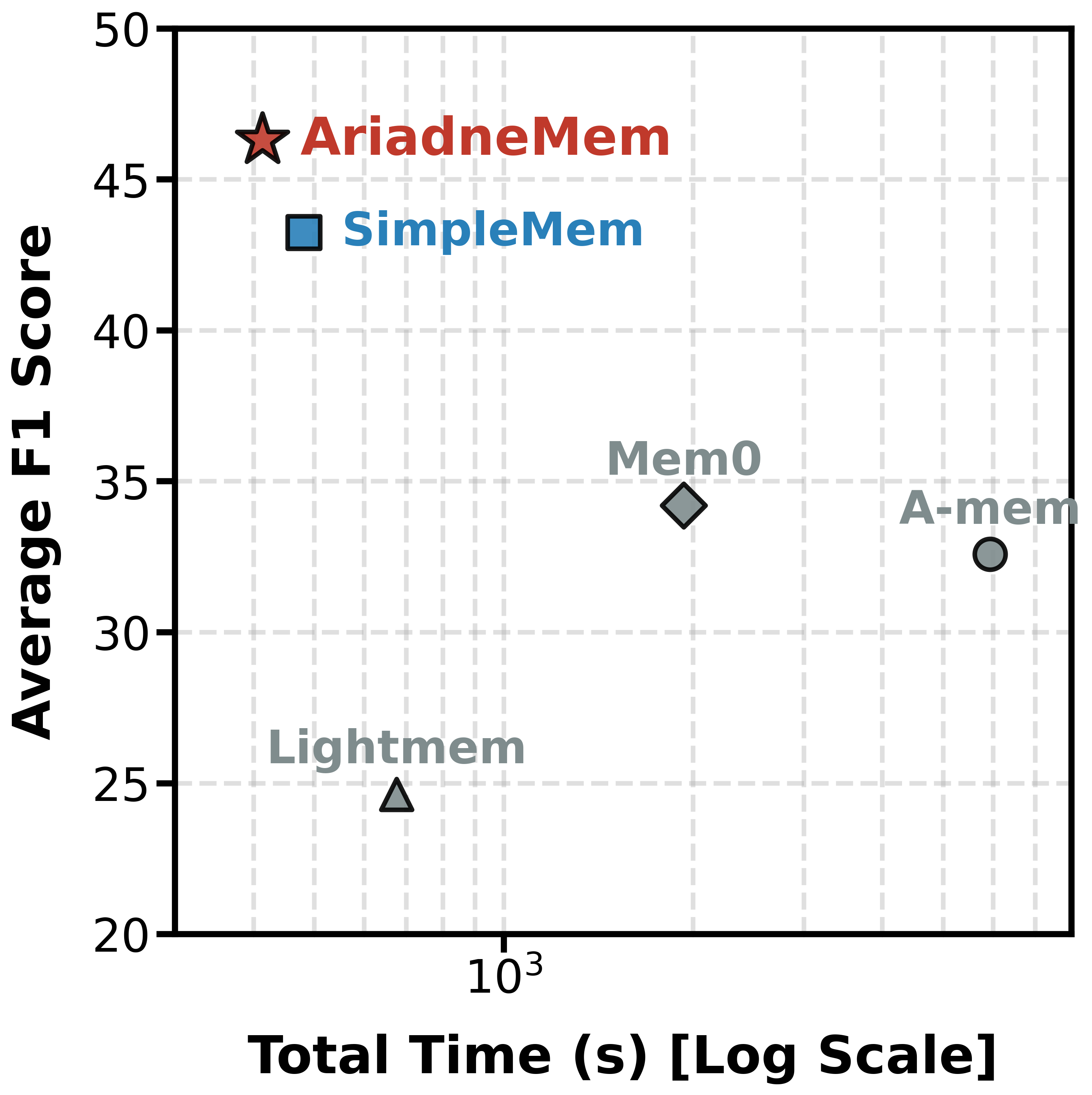

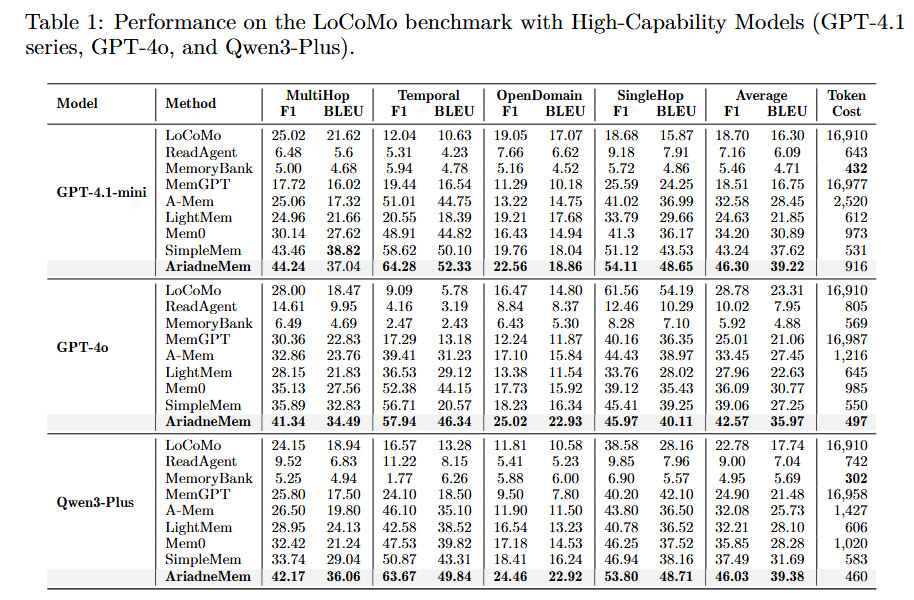

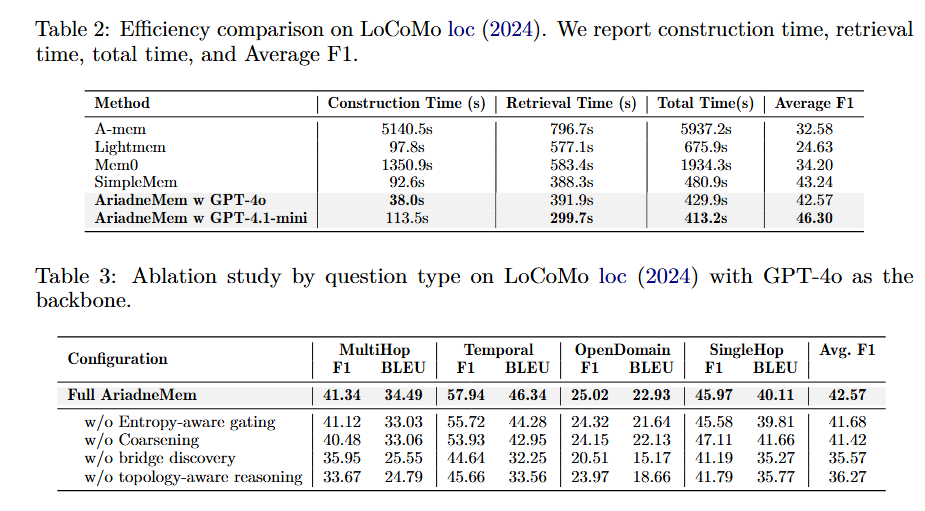

In experiments on the LoCoMo benchmark with GPT-4o, AriadneMem improves Multi-Hop F1 by 15.2% and Average F1 by 9.0% over strong baselines.

Crucially, by offloading reasoning to the graph layer, AriadneMem reduces total runtime by 77.8% while using only 497 context tokens.

Methodology

Case Study

Experimental Results

Ablation Study

BibTeX

@misc{zhu2026ariadnememthreadingmazelifelong,
  title={AriadneMem: Threading the Maze of Lifelong Memory for LLM Agents},
  author={Wenhui Zhu and Xiwen Chen and Zhipeng Wang and Jingjing Wang and Xuanzhao Dong and Minzhou Huang and Rui Cai and Hejian Sang and Hao Wang and Peijie Qiu and Yueyue Deng and Prayag Tiwari and Brendan Hogan Rappazzo and Yalin Wang},
  year={2026},
  eprint={2603.03290},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2603.03290},
}